Chapter 1

The Foundation. How modern AI models work under the hood.

What is GPT? The Foundation of Generative AI

GPT (Generative Pre-trained Transformer) is the model family that launched the modern AI era. Developed by OpenAI, GPT models are trained on vast amounts of text to predict the next token (roughly, the next word or word fragment) in a sequence. This simple objective produces remarkably sophisticated capabilities, and understanding GPT is foundational to understanding all modern LLMs. The core insight is that by learning to predict what comes next across billions of sentences, the model implicitly learns grammar, facts about the world, a degree of reasoning ability, and even some capacity for creative thought.

How GPT Is Trained

GPT models go through a two-phase training process that transforms a blank neural network into a capable assistant:

Phase 1: Pre-training

The model reads billions of web pages, books, and articles, learning to predict the next token. This self-supervised phase (the text itself supplies the correct answers, so no human labeling is needed) gives the model broad world knowledge, language understanding, and basic reasoning. It requires massive compute: thousands of GPUs running for weeks or months. The result is a “base model” that is remarkably fluent but not yet aligned with human intentions. It can complete text convincingly, but it does not know how to be a helpful assistant.
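To make the pre-training objective concrete: at every position, the model outputs a score (logit) for each vocabulary token, and training minimizes the cross-entropy between those scores and the token that actually came next. The NumPy sketch below computes that loss for a made-up four-token vocabulary; the logits are invented numbers standing in for a model's output.

```python
import numpy as np

def next_token_loss(logits: np.ndarray, token_ids: np.ndarray) -> float:
    """Average next-token cross-entropy.

    logits: (seq_len, vocab_size) scores the model produced at each position.
    token_ids: (seq_len + 1,) token IDs of the training text; the prediction at
    position t is scored against token_ids[t + 1], the token that came next.
    """
    targets = token_ids[1:]
    # Numerically stable log-softmax over the vocabulary.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    # Pick out the log-probability the model gave each true next token.
    picked = log_probs[np.arange(len(targets)), targets]
    return float(-picked.mean())


# Toy example: a 4-token vocabulary and a 3-token text give 2 predictions.
logits = np.array([[2.0, 0.5, 0.1, -1.0],
                   [0.0, 0.0, 3.0, 0.0]])
text = np.array([0, 0, 2])
print(round(next_token_loss(logits, text), 4))
```

A model that spreads probability evenly over a vocabulary of V tokens scores log(V); pre-training pushes the loss down by putting more probability on the true next token.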

Phase 2: Fine-tuning with human feedback (RLHF)

The pre-trained model is then refined to be helpful, harmless, and honest. Typically it is first fine-tuned on example conversations written by humans; then humans rate or rank model responses, a reward model learns from these ratings, and reinforcement learning optimizes the model's behavior against that reward model. This is what transforms a raw text predictor into a useful assistant. The process is called RLHF (Reinforcement Learning from Human Feedback) and is the key technique that made ChatGPT feel fundamentally different from earlier language models.
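The reward-modeling step is often trained on pairwise comparisons: for one prompt, labelers pick the better of two responses, and the reward model learns to score the preferred one higher. A common loss for this (used in InstructGPT-style pipelines) is −log σ(r_chosen − r_rejected). The sketch below shows only that loss on plain numbers; the reward values are hypothetical, and the later reinforcement-learning step (e.g., PPO) that optimizes the assistant against the trained reward model is not shown.

```python
import math

def pairwise_reward_loss(r_chosen: float, r_rejected: float) -> float:
    """Bradley-Terry style loss: -log(sigmoid(r_chosen - r_rejected)).

    Small when the reward model scores the human-preferred response well
    above the rejected one; large when it gets the order wrong.
    """
    margin = r_chosen - r_rejected
    # -log(sigmoid(m)) == log(1 + exp(-m)); branch to avoid overflow for large |m|.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


print(pairwise_reward_loss(2.0, -1.0))  # preferred response scored higher: small loss
print(pairwise_reward_loss(-1.0, 2.0))  # order reversed: large loss
```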

Key Concepts

Tokens

LLMs don't process words; they process tokens (subword pieces). For example, a tokenizer might split “tokenization” into “token” + “ization”; the exact split depends on the model's vocabulary. For English text, one token is roughly three-quarters of a word, so a 128K-token context window holds approximately 96,000 words, or roughly 200 pages. Understanding tokens matters because pricing, rate limits, and context window sizes are all measured in tokens, not words.
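A rough illustration of subword splitting, assuming a tiny hand-picked vocabulary: the function below greedily takes the longest vocabulary piece at each step (a simplified, WordPiece-like scheme; production tokenizers such as BPE learn their vocabularies from data and also handle spaces, punctuation, and raw bytes). The second helper reproduces the words-per-token arithmetic from the paragraph above, using the ~0.75 rule of thumb and an assumed 500 words per page.

```python
def greedy_subword_tokenize(word: str, vocab: set[str]) -> list[str]:
    """Split a word into the longest vocabulary pieces, left to right."""
    pieces = []
    start = 0
    while start < len(word):
        # Try the longest candidate first, shrinking until a match is found.
        for end in range(len(word), start, -1):
            if word[start:end] in vocab:
                pieces.append(word[start:end])
                start = end
                break
        else:
            # No vocabulary entry matches: fall back to a single character.
            pieces.append(word[start])
            start += 1
    return pieces


def estimate_words(tokens: int, words_per_token: float = 0.75) -> int:
    """Rule-of-thumb conversion for English text (about 3/4 word per token)."""
    return round(tokens * words_per_token)


# Hypothetical toy vocabulary, chosen to reproduce the example in the text.
vocab = {"token", "ization", "iz", "ation", "to", "ken"}
print(greedy_subword_tokenize("tokenization", vocab))  # ['token', 'ization']

words = estimate_words(128_000)   # 96,000 words
pages = words / 500               # assumed ~500 words per printed page
print(words, round(pages))
```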

Temperature

Temperature controls randomness in the model's output. At temperature 0, generation is effectively deterministic: the model always picks the most likely next token, producing consistent and predictable outputs (hosted APIs may still show small run-to-run variations). At temperature 1, the model samples from its unmodified probability distribution, producing more varied and surprising text; values above 1 flatten the distribution further. For factual tasks like data extraction or classification, use a low temperature. For creative writing, brainstorming, or generating diverse options, use a higher one.
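Under the hood, temperature T divides the model's logits before the softmax: p_i = exp(z_i / T) / Σ_j exp(z_j / T). Small T sharpens the distribution toward the top token; large T flattens it. A minimal NumPy sketch with made-up logits, treating T = 0 as greedy argmax (dividing by zero is undefined, so providers handle this case specially; the exact handling here is an assumption):

```python
import numpy as np

def apply_temperature(logits: np.ndarray, temperature: float) -> np.ndarray:
    """Turn raw logits into sampling probabilities at a given temperature."""
    if temperature == 0:
        # Greedy decoding: all probability on the single highest-scoring token.
        probs = np.zeros_like(logits, dtype=float)
        probs[np.argmax(logits)] = 1.0
        return probs
    scaled = logits / temperature
    scaled = scaled - scaled.max()   # numerical stability
    exp = np.exp(scaled)
    return exp / exp.sum()


def sample_next_token(logits, temperature: float, rng: np.random.Generator) -> int:
    probs = apply_temperature(np.asarray(logits, dtype=float), temperature)
    return int(rng.choice(len(probs), p=probs))


logits = np.array([2.0, 1.0, 0.5, -1.0])   # made-up scores for a 4-token vocabulary
for t in (0.0, 0.5, 1.0, 2.0):
    print(t, np.round(apply_temperature(logits, t), 3))

rng = np.random.default_rng(seed=0)
print([sample_next_token(logits, 1.0, rng) for _ in range(10)])
```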

Context Window

The context window is the total text (input + output) the model can process at once. Think of it as working memory: everything the model can “see” simultaneously. Larger windows enable analyzing entire codebases, processing book-length documents, or maintaining very long conversations without losing context. However, cost scales with usage: more tokens in the context means higher per-request costs. Modern models range from 128K tokens (GPT-4o) to 1M+ tokens (GPT-4.1, Claude Sonnet 4.6 and Opus 4.6, Gemini).
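Because input and output share the window, applications that hold long conversations usually trim history before each call. The sketch below keeps the system prompt, reserves room for the reply, and drops the oldest turns first. The helper names are hypothetical, and the 4-characters-per-token estimate is only a rule of thumb for English; in practice you would count tokens with the model's own tokenizer.

```python
from typing import Callable

Message = dict[str, str]

def rough_token_count(text: str) -> int:
    """Crude estimate: about 4 characters per token for English (rule of thumb)."""
    return max(1, len(text) // 4)


def fit_to_context(messages: list[Message], context_window: int,
                   max_output_tokens: int,
                   count: Callable[[str], int] = rough_token_count) -> list[Message]:
    """Drop the oldest non-system messages until input + output fits the window."""
    budget = context_window - max_output_tokens   # input and output share the window
    system = [m for m in messages if m["role"] == "system"]
    rest = [m for m in messages if m["role"] != "system"]
    used = sum(count(m["content"]) for m in system)
    kept: list[Message] = []
    # Walk backwards so the most recent turns are kept first.
    for m in reversed(rest):
        cost = count(m["content"])
        if used + cost > budget:
            break
        kept.append(m)
        used += cost
    return system + list(reversed(kept))


history = [{"role": "system", "content": "You are a helpful assistant."}]
history += [{"role": "user", "content": "x" * 400} for _ in range(10)]  # ~100 tokens each
trimmed = fit_to_context(history, context_window=600, max_output_tokens=200)
print(len(trimmed))
```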

Quick Start: Your First API Call

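The fastest way to understand what LLMs can do is to call one. Below are minimal working examples for two of the most widely used APIs, OpenAI and Anthropic. Each takes just a few lines of Python to get a response from a frontier model. Both examples assume the official SDK is installed (pip install openai anthropic) and that your API key is set in the OPENAI_API_KEY or ANTHROPIC_API_KEY environment variable.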

```python
from openai import OpenAI

client = OpenAI()  # reads OPENAI_API_KEY from the environment

response = client.chat.completions.create(
    model="gpt-4o",
    messages=[
        {"role": "system", "content": "You are a helpful assistant."},
        {"role": "user", "content": "Explain transformers in 3 sentences."},
    ],
    temperature=0.7,  # moderate randomness
)

print(response.choices[0].message.content)
```

```python
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

message = client.messages.create(
    model="claude-sonnet-4-20250514",
    max_tokens=1024,  # required by the Messages API; caps the reply length
    messages=[
        {"role": "user", "content": "Explain transformers in 3 sentences."},
    ],
)

print(message.content[0].text)  # the reply is a list of content blocks
```

Applications

Chatbots

Powering conversational interfaces that can understand and respond to user queries in natural language. Modern chatbots built on GPT can handle customer support, sales inquiries, and internal knowledge retrieval, often resolving questions that previously required human agents.
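One detail that surprises newcomers: chat APIs like the ones in the quick start do not remember earlier turns. The application keeps the conversation history and resends all of it with every request. A minimal sketch of that pattern, where complete is a hypothetical hook you would implement by wrapping an API call (for example, returning response.choices[0].message.content from client.chat.completions.create); a fake function stands in so the sketch runs offline:

```python
from typing import Callable

Message = dict[str, str]

class Chatbot:
    """Keeps the conversation history and resends it on every turn."""

    def __init__(self, complete: Callable[[list[Message]], str], system_prompt: str):
        self.complete = complete   # hypothetical hook: full history in, reply text out
        self.messages: list[Message] = [{"role": "system", "content": system_prompt}]

    def ask(self, user_text: str) -> str:
        self.messages.append({"role": "user", "content": user_text})
        reply = self.complete(self.messages)   # the model sees the whole history
        self.messages.append({"role": "assistant", "content": reply})
        return reply


# Stand-in for a real model call, so the sketch runs without an API key.
def fake_complete(messages: list[Message]) -> str:
    return f"(reply to: {messages[-1]['content']!r}, seen {len(messages)} messages)"


bot = Chatbot(fake_complete, "You are a support assistant for an online store.")
print(bot.ask("Where is my order?"))
print(bot.ask("Can I change the address?"))
```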

Content Generation

Creating articles, summaries, marketing copy, and creative writing based on prompts. GPT models can adapt their tone and style to match brand guidelines, generate multiple variations for A/B testing, and produce first drafts that humans can refine rather than writing from scratch.

Code Assistance

Helping developers write, debug, and understand code through tools like GitHub Copilot, Claude Code, and Codex CLI. These tools can generate entire functions from natural language descriptions, explain complex codebases, suggest fixes for bugs, and automate repetitive refactoring tasks.

Understanding Transformers: The Brain Behind GPT

Transformers are the backbone of models like GPT. They are neural networks designed to handle sequential data, such as text, and are known for their ability to capture long-range dependencies in data.

Types of Transformers

Encoder-only transformers

- Focus on understanding text (e.g., sentiment analysis, classification)

- Ideal for tasks where the goal is to analyze input text rather than generate new text

- Examples include BERT, RoBERTa, and DistilBERT

Decoder-only transformers

- Specialized for generating text

- Perfect for tasks like answering questions or writing essays

- Examples include GPT-3, GPT-4, and LLaMA

- For our knowledge assistant, a decoder-only transformer like GPT is the best fit

Encoder-decoder transformers

- Used for tasks that require both understanding and generating text

- Ideal for translation, summarization, and question-answering

- Examples include T5, BART, and mT5
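Much of the difference between these three families shows up in the attention mask, which decides which tokens each position may look at. A small NumPy sketch (boolean masks, True means "may attend"): encoder-only models use full bidirectional attention, decoder-only models use a causal mask, and encoder-decoder models pair a bidirectional encoder with a causal decoder that also cross-attends to every encoder position.

```python
import numpy as np

def bidirectional_mask(n: int) -> np.ndarray:
    """Encoder-only (BERT-style): every token may attend to every token."""
    return np.ones((n, n), dtype=bool)


def causal_mask(n: int) -> np.ndarray:
    """Decoder-only (GPT-style): token i may attend only to tokens 0..i."""
    return np.tril(np.ones((n, n), dtype=bool))


def cross_attention_mask(n_target: int, n_source: int) -> np.ndarray:
    """Encoder-decoder (T5-style): each decoder position sees the whole encoded input."""
    return np.ones((n_target, n_source), dtype=bool)


print(causal_mask(4).astype(int))
```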

LLMs - Large Language Models

Large Language Models (LLMs) are the backbone of modern AI systems, enabling applications like chatbots, content generation, and code assistance. Let's explore the architecture and key components that make these models work.

Architecture of LLMs

Core Components and Processing Flow

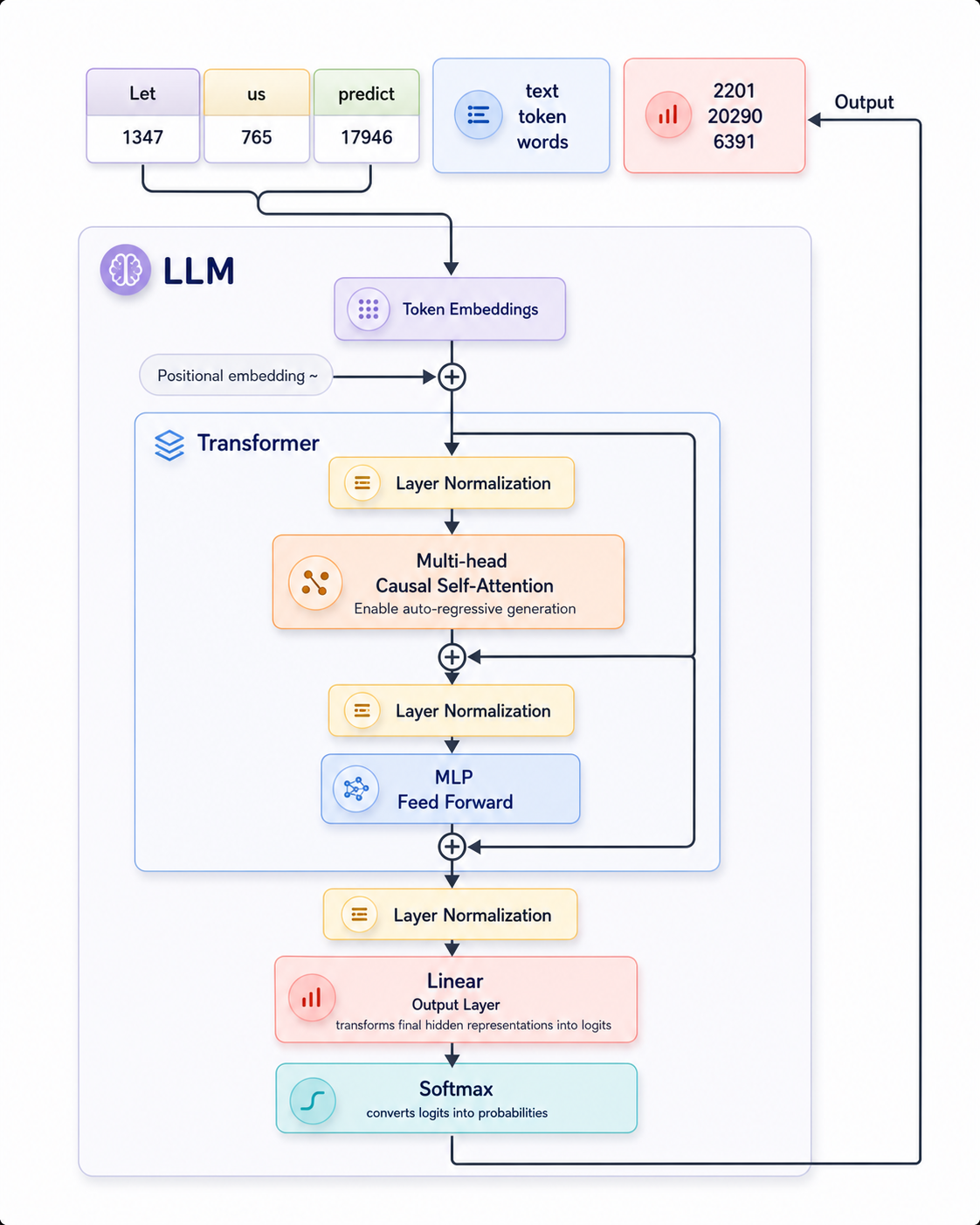

As shown in the diagram, an LLM processes text through several key stages:

Input Tokenization

The input text (e.g., "Let us predict") is converted into numerical token IDs (1347, 765, 17946) that the model can process. Each token represents a word or subword in the model's vocabulary.

Token Embeddings

These token IDs are transformed into dense vector representations (embeddings) that capture semantic meaning. This is the first step inside the LLM processing pipeline.

Positional Embedding

Information about the position of each token is added (marked with "~" in the diagram) and combined with the token embeddings. This helps the model understand the sequence order since transformers process all tokens simultaneously.
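The two embedding steps amount to table lookups followed by an addition. The sketch below assumes GPT-2-style learned absolute position embeddings and GPT-2's vocabulary size (50,257); the tables are filled with random numbers in place of trained weights, and d_model is shrunk to 8 for readability. Other models encode position differently (Llama, for instance, uses rotary position embeddings).

```python
import numpy as np

vocab_size, context_length, d_model = 50_257, 1_024, 8   # tiny d_model for display
rng = np.random.default_rng(0)

# In a trained model these tables are learned; random values stand in here.
token_embedding = rng.normal(size=(vocab_size, d_model))
position_embedding = rng.normal(size=(context_length, d_model))

token_ids = np.array([1347, 765, 17946])   # the IDs for "Let us predict" from the diagram
positions = np.arange(len(token_ids))      # 0, 1, 2

# Row lookup: each ID selects one row of the corresponding table.
tok = token_embedding[token_ids]           # shape (3, d_model)
pos = position_embedding[positions]        # shape (3, d_model)
x = tok + pos                              # input to the first transformer block
print(x.shape)
```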

Transformer Block

The core of the LLM contains transformer blocks with several components:

- Layer Normalization: Stabilizes inputs at various stages

- Multi-head Causal Self-Attention: Allows tokens to attend to previous tokens in the sequence, enabling auto-regressive generation

- MLP Feed Forward: Processes each position independently with a multi-layer perceptron

- Residual Connections: The "+" circles in the diagram represent residual connections that help with gradient flow during training
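The components listed above can be wired together into a single forward pass. Below is a minimal NumPy sketch of one transformer block in the pre-norm layout used by GPT-2 (LayerNorm before each sublayer, residual add after). It assumes random weights, omits biases and dropout, and uses the tanh approximation of GELU in the MLP; a real model stacks dozens of these blocks.

```python
import numpy as np

def layer_norm(x, gamma, beta, eps=1e-5):
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return gamma * (x - mean) / np.sqrt(var + eps) + beta


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def gelu(x):
    # tanh approximation of GELU, as used in GPT-2
    return 0.5 * x * (1 + np.tanh(np.sqrt(2 / np.pi) * (x + 0.044715 * x**3)))


def causal_self_attention(x, w_qkv, w_out, n_heads):
    seq_len, d_model = x.shape
    d_head = d_model // n_heads
    q, k, v = np.split(x @ w_qkv, 3, axis=-1)                # each (seq_len, d_model)
    # Reshape to (n_heads, seq_len, d_head) so each head attends independently.
    q, k, v = (t.reshape(seq_len, n_heads, d_head).transpose(1, 0, 2) for t in (q, k, v))
    scores = q @ k.transpose(0, 2, 1) / np.sqrt(d_head)      # (n_heads, seq_len, seq_len)
    mask = np.tril(np.ones((seq_len, seq_len), dtype=bool))
    scores = np.where(mask, scores, -np.inf)                 # hide future tokens
    out = softmax(scores) @ v                                # (n_heads, seq_len, d_head)
    out = out.transpose(1, 0, 2).reshape(seq_len, d_model)   # concatenate the heads
    return out @ w_out


def transformer_block(x, p, n_heads):
    # Pre-norm: LayerNorm -> sublayer -> residual add (the "+" circles in the diagram).
    x = x + causal_self_attention(layer_norm(x, *p["ln1"]), p["w_qkv"], p["w_out"], n_heads)
    h = gelu(layer_norm(x, *p["ln2"]) @ p["w_fc"])           # expand to 4 * d_model
    return x + h @ p["w_proj"]                               # project back, residual add


d_model, n_heads, seq_len = 16, 4, 3
rng = np.random.default_rng(0)
params = {
    "ln1": (np.ones(d_model), np.zeros(d_model)),
    "ln2": (np.ones(d_model), np.zeros(d_model)),
    "w_qkv": rng.normal(scale=0.02, size=(d_model, 3 * d_model)),
    "w_out": rng.normal(scale=0.02, size=(d_model, d_model)),
    "w_fc": rng.normal(scale=0.02, size=(d_model, 4 * d_model)),
    "w_proj": rng.normal(scale=0.02, size=(4 * d_model, d_model)),
}
x = rng.normal(size=(seq_len, d_model))
print(transformer_block(x, params, n_heads).shape)
```

Changing a later token never alters the outputs at earlier positions, which is exactly the property the causal mask is meant to guarantee.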

Output Processing

After the transformer processing:

- Final Layer Normalization: Normalizes the output of the last transformer block

- Linear Output Layer: Transforms the final hidden representations into logits (raw prediction scores) for each token in the vocabulary

- Softmax: Converts these logits into probabilities, allowing the model to predict the next token
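The output stage in code: normalize the final hidden states, project them to one logit per vocabulary entry, and apply a softmax. This sketch uses random weights and a toy 100-token vocabulary, omits LayerNorm's learned scale and shift, and keeps only the last position because that is all generation needs. (Some models, GPT-2 among them, reuse the token-embedding matrix as this output projection.)

```python
import numpy as np

def layer_norm(x, eps=1e-5):
    # Learned scale and shift omitted for brevity.
    return (x - x.mean(axis=-1, keepdims=True)) / np.sqrt(x.var(axis=-1, keepdims=True) + eps)


def next_token_distribution(hidden, w_vocab):
    """hidden: (seq_len, d_model) output of the last transformer block.
    w_vocab: (d_model, vocab_size) linear output layer.
    Returns probabilities over the vocabulary for the token after the last position."""
    logits = layer_norm(hidden)[-1] @ w_vocab   # at generation time only the last position matters
    logits = logits - logits.max()              # numerical stability
    e = np.exp(logits)
    return e / e.sum()


rng = np.random.default_rng(0)
hidden = rng.normal(size=(3, 16))
w_vocab = rng.normal(size=(16, 100))            # toy vocabulary of 100 tokens
probs = next_token_distribution(hidden, w_vocab)
print(probs.shape, round(float(probs.sum()), 6), int(probs.argmax()))
```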

Output Generation

On the output side, the diagram shows three token IDs (2201, 20290, 6391): the model produces one next-token prediction for each input position. The prediction at the last position is the newly generated token, and it becomes part of the context for generating the following token in an auto-regressive manner.

Text Generation Mechanism

- The model processes the input tokens ("Let us predict" in the example)

- It selects the next token from the probability distribution produced by the softmax (the single most likely token under greedy decoding, or a sampled token at higher temperatures)

- The predicted token is appended to the input sequence

- This new, longer sequence becomes the input for the next prediction

- The process repeats, with the model generating text one token at a time

- The causal self-attention mechanism ensures each prediction only considers previous tokens, not future ones
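The loop above is short enough to write out directly. In the sketch below, next_token_probs is any function returning a probability vector for the next token; a hypothetical bigram table stands in for a real LLM so the example runs instantly. Decoding here is greedy; swapping np.argmax for temperature-based sampling gives the more varied behavior described in the Temperature section.

```python
import numpy as np

def generate(next_token_probs, prompt_ids, max_new_tokens, eos_id=None):
    """Greedy auto-regressive decoding.

    next_token_probs(ids) -> probability vector for the token after `ids`.
    Each step appends the chosen token and feeds the longer sequence back in.
    """
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        probs = next_token_probs(ids)
        next_id = int(np.argmax(probs))   # greedy: most likely token
        ids.append(next_id)
        if next_id == eos_id:
            break
    return ids


# Toy stand-in for an LLM: a bigram table that only looks at the last token.
# (A real model conditions on the whole prefix through causal self-attention.)
vocab = ["<eos>", "Let", "us", "predict", "the", "next", "token"]
bigram = {1: 2, 2: 3, 3: 4, 4: 5, 5: 6, 6: 0}

def toy_model(ids):
    probs = np.full(len(vocab), 0.01)
    probs[bigram[ids[-1]]] = 1.0
    return probs / probs.sum()


out = generate(toy_model, [1, 2, 3], max_new_tokens=10, eos_id=0)
print(" ".join(vocab[i] for i in out))
```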

LLM Types and Categories

Large Language Models come in various types, each designed for specific use cases and with different capabilities. Understanding these categories helps in selecting the right model for your AI assistant.

Text-Based LLMs (Traditional)

These models focus primarily on text generation and understanding, forming the foundation of most AI assistants.

| Type | Description | Examples | Open/Proprietary |
|---|---|---|---|
| Base LLMs | General text generation models without specific optimization | GPT-3, Llama 2, Falcon | Both |
| Chat/Instruction-tuned | Optimized for conversation and following instructions | Claude 3.5 Sonnet, GPT-4o, Llama 3 | Both |
| Reasoning-focused | Enhanced for logical thinking and step-by-step problem solving | Claude 3.7 Sonnet (extended thinking), OpenAI o3, Gemini 2.5 Pro, DeepSeek-R1 | Mostly Proprietary |

Multimodal Models

These models can process and generate content across multiple modalities, including text, images, audio, and video.

| Type | Description | Examples | Open/Proprietary |
|---|---|---|---|
| Vision Language Models (VLMs) | Process images and text together | GPT-4V, Claude 3 Opus, Gemini, Flamingo | Mostly Proprietary |
| Audio-Text Models | Process audio and text | Whisper, AudioLLM, GPT-4o | Both |
| Video-Text Models | Understand and generate content about videos | Gemini 1.5, GPT-4o, VideoLLaMA | Both |
| Universal Multimodal Models | Handle multiple modalities (text, image, audio, video) | Gemini 1.5 Pro, GPT-4o | Proprietary |

Document/Knowledge-Focused Models

These models specialize in processing documents and structured knowledge, making them ideal for knowledge assistants.

| Type | Description | Examples | Open/Proprietary |
|---|---|---|---|
| Document Processing LLMs | Specialized for PDF and document understanding | Claude 3.5 Sonnet, GPT-4o, PDF.ai, Docquery | Both |
| RAG-optimized Models | Enhanced for retrieval-augmented generation | Cohere Command R+, Kimi, Perplexity Sonar | Both |
| Knowledge Graphs + LLMs | Combine structured knowledge with LLM capabilities | Neo4j + LLM integrations, GraphRAG | Both |

Agent & Specialized Models

These models are designed for specific tasks or domains, offering enhanced performance for particular use cases.

| Type | Description | Examples | Open/Proprietary |
|---|---|---|---|
| Agentic LLMs | Enable tool use and autonomous action | Anthropic's Claude Code, AutoGPT, LangChain agents | Both |
| Function-calling Models | Optimized for API interactions & structured outputs | GPT-4 Turbo, Claude 3 Opus, Gemini | Both |
| Domain-specific LLMs | Optimized for specific fields | BloombergGPT (finance), Med-PaLM (medicine), Code Llama (programming) | Both |
| Small Specialized Models | Tiny models optimized for specific tasks | Phi-3 (3.8B), DistilBERT, TinyLlama | Mostly Open Source |
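Function calling works the same way across providers in outline: you describe each tool with a JSON Schema, the model replies with a structured request (tool name plus arguments) instead of running anything itself, and your code executes the function and sends the result back. The sketch below follows the field names of Anthropic's Messages API (input_schema, tool_use, tool_result); the get_weather tool and its data are hypothetical, and plain dicts stand in for the SDK's typed response objects.

```python
import json

# Tool definition: a name, a description, and a JSON Schema for the arguments.
get_weather_tool = {
    "name": "get_weather",
    "description": "Get the current temperature for a city.",
    "input_schema": {
        "type": "object",
        "properties": {"city": {"type": "string"}},
        "required": ["city"],
    },
}


def get_weather(city: str) -> dict:
    # Hypothetical local implementation; a real tool would call a weather service.
    return {"city": city, "temp_c": 21}


TOOLS = {"get_weather": get_weather}


def run_tool_call(block: dict) -> dict:
    """Execute one tool_use block from the model and build the tool_result reply."""
    result = TOOLS[block["name"]](**block["input"])
    return {
        "type": "tool_result",
        "tool_use_id": block["id"],
        "content": json.dumps(result),
    }


# Shape of a tool_use content block returned by the model (values made up).
block = {"type": "tool_use", "id": "toolu_example", "name": "get_weather",
         "input": {"city": "Paris"}}
print(run_tool_call(block))
```

In a real loop you would pass tools=[get_weather_tool] to client.messages.create, check the response for tool_use blocks, and append the resulting tool_result block to a user message in the next request.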

Current Models: A Comprehensive Guide

The AI model landscape is split between two broad camps: proprietary models from companies like OpenAI, Anthropic, and Google, which are accessed exclusively through APIs and subscriptions, and open-weight models from Meta, Mistral, DeepSeek, and others, whose model weights are publicly released so anyone can download, run, and fine-tune them.

Understanding the differences matters. Proprietary models often lead on benchmarks and offer polished product ecosystems, but lock you into a vendor. Open-weight models give you full control over deployment, privacy, and customization, but require your own infrastructure. The best choice depends on your use case, budget, compliance requirements, and how much control you need over the model itself.

OpenAI maintains two distinct model families. The GPT line is designed for fast, general-purpose text and multimodal generation — think chatbots, content creation, and code assistance. The o-series is built for deliberate reasoning: these models “think” step-by-step before answering, making them dramatically better at math, science, and complex logic problems, at the cost of higher latency and token usage.

GPT Family (General Purpose)

GPT-4o

The multimodal flagship that dominated through most of 2024. GPT-4o accepts both text and images as input and generates text output. It was the workhorse model for ChatGPT and API developers alike, offering a strong balance of capability, speed, and cost. For many use cases, it remains a reliable baseline.

GPT-4.1

A breakthrough release featuring a 1 million token context window — enough to process entire codebases or book-length documents in a single prompt. Comes in three sizes: GPT-4.1 (full), GPT-4.1 mini, and GPT-4.1 nano. Delivered major gains in coding and instruction following, and was designed as the primary model for API developers building production applications.

GPT-5

A fundamentally different architecture: a unified system that routes between a fast model for simple questions and a deeper reasoning model for complex ones. Users do not need to choose between “GPT” and “o-series” models; GPT-5 decides automatically. OpenAI reported roughly 45% fewer factual errors than GPT-4o and new state-of-the-art results across coding, math, writing, and visual perception benchmarks.

o-Series (Reasoning Models)

o3

A reasoning model that thinks step-by-step before responding, producing a chain-of-thought trace that dramatically improves accuracy on hard problems. Beyond reasoning, o3 can agentically use tools — web search, code execution, and image generation — making it capable of multi-step research and problem-solving workflows. Scored 88.9% on the AIME 2025 math competition, a benchmark previously thought to require human-level mathematical insight.

o4-mini

Released the same day as o3, and remarkably, it outscored o3 on math benchmarks, achieving 92.7% on AIME 2025 despite being smaller and significantly cheaper. At $1.10 input / $4.40 output per million tokens, o4-mini was one of the most cost-effective reasoning models available at launch, making advanced reasoning accessible for high-volume applications.
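Per-million-token pricing is easy to turn into a budget. A small sketch using the o4-mini prices quoted above; the request size (2,000 input and 500 output tokens) is an assumption for illustration. Reasoning models bill their hidden reasoning tokens as output tokens, so real output counts are often higher than the visible answer suggests.

```python
def request_cost(input_tokens: int, output_tokens: int,
                 input_price_per_m: float, output_price_per_m: float) -> float:
    """Dollar cost of one API call given per-million-token prices."""
    return (input_tokens * input_price_per_m + output_tokens * output_price_per_m) / 1_000_000


# o4-mini list prices from the text: $1.10 input / $4.40 output per million tokens.
# output_tokens should include any hidden reasoning tokens.
one_call = request_cost(2_000, 500, 1.10, 4.40)
print(f"${one_call:.4f} per call, ${one_call * 100_000:,.2f} per 100k calls")
```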

OpenAI Product Ecosystem

| Product | Description |
|---|---|
| ChatGPT Plans | Free / Plus ($20/mo) / Pro ($200/mo) / Business / Enterprise. Each tier unlocks higher usage limits and access to newer models. |
| Responses API | Replaced the older Assistants API. The primary way developers build applications on OpenAI models, with built-in support for tool use and structured outputs. |
| Agents SDK | Python and TypeScript libraries for orchestrating multi-step AI agent workflows, including handoffs between specialized agents. |
| Codex CLI | An open-source terminal-based coding agent that can read your codebase, propose changes, and execute commands, similar in concept to Claude Code. |
| Realtime API | Enables live voice conversations with sub-second latency, powering voice assistants and real-time translation. |
| Deep Research | An agentic feature that autonomously browses the web, synthesizes information from dozens of sources, and produces comprehensive research reports. |

Anthropic's Claude models are known for strong instruction following, nuanced writing, and a safety-focused design philosophy. The lineup spans three tiers: Haiku for speed and cost efficiency, Sonnet for the best balance of performance and price, and Opus for maximum intelligence on the hardest tasks. Current Claude models support extended thinking (chain-of-thought reasoning), tool use, and vision.

Claude Sonnet 4

The balanced workhorse of the Claude 4 generation. Supports hybrid reasoning — it can deliver both instant responses for simple queries and extended thinking for complex ones within the same model. Excellent for code reviews, customer support agents, content generation, and any workflow that needs a mix of speed and depth.

Claude Opus 4

The most intelligent model at launch, scoring 72.5% on SWE-bench Verified (a benchmark measuring real-world software engineering ability). Built specifically for sustained, multi-hour agentic workflows — it can autonomously plan, execute, and iterate on complex coding tasks. Powers Claude Code for autonomous background tasks.

Claude Haiku 4.5

Fast and cheap without sacrificing too much capability. The default model for free-tier Claude users. Offers the best price-to-performance ratio in the Claude lineup, making it ideal for high-volume applications like classification, extraction, and simple Q&A where sub-second latency matters.

Claude Opus 4.5

A landmark release: the first AI model to break 80% on SWE-bench Verified, scoring 80.9%. Introduced the “effort” parameter, allowing developers to tune computational intensity — use less effort for simple tasks (saving cost) and maximum effort for the hardest problems. Also came with a 67% price cut compared to Opus 4, making top-tier intelligence far more accessible.

Claude 4.6 Generation

Both Sonnet 4.6 and Opus 4.6 shipped with 1 million token context windows at standard pricing — no surcharge for the extended context. This made it possible to process entire codebases, lengthy legal documents, or months of conversation history in a single prompt without paying a premium.

Claude Opus 4.7

The latest and most capable Claude model. Improved software engineering and vision capabilities over Opus 4.6. Supports up to 128K output tokens, enabling generation of very long documents, complete file rewrites, and detailed analyses in a single response.

Anthropic Product Ecosystem

| Product | Description |
|---|---|
| Claude Code | An agentic terminal-based coding tool that can navigate codebases, make multi-file edits, run tests, and commit changes. Went GA in May 2025 and rapidly became a leading developer tool. |
| Claude Agent SDK | Released September 2025. A framework for building custom agents on the same agent harness that powers Claude Code, with built-in tools, subagents, permission controls, and MCP integration. |
| Extended Thinking | Allows Claude to reason step-by-step in a visible thinking trace before answering, dramatically improving performance on math, logic, and analysis tasks. |
| Prompt Caching | 90% discount on cached input tokens: cache reads are billed at 10% of the normal input price, while writing to the cache costs slightly more than a normal input token. Reuse long system prompts, documents, or conversation history across requests without paying full price each time. |
| Computer Use | Claude can see and interact with computer screens (clicking, typing, scrolling), enabling automation of GUI-based workflows. |
| Citations | Responses can include precise citations pointing to specific passages in provided documents, enabling verifiable answers. |
| Tool Use | Claude can call external functions and APIs with fine-grained streaming, enabling real-time integration with databases, search engines, and custom tools. |
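The 90% figure applies to cache reads; writing a prompt prefix into the cache is billed at a premium (1.25× the base input price for the default five-minute cache, per Anthropic's pricing at the time of writing; check current rates). A worked sketch, assuming a 50,000-token document reused across 10 requests at an assumed $3 per million input tokens. In the API, the cacheable prefix is marked with cache_control={"type": "ephemeral"} on a content block.

```python
def cached_prompt_cost(prefix_tokens: int, requests: int, price_per_m: float,
                       write_multiplier: float = 1.25, read_multiplier: float = 0.10):
    """Input-token cost of reusing the same long prefix across several requests.

    Returns (cost_without_cache, cost_with_cache). The first request writes the
    cache; later requests within the cache lifetime read from it.
    """
    base = prefix_tokens * price_per_m / 1_000_000
    without_cache = requests * base
    with_cache = base * write_multiplier + (requests - 1) * base * read_multiplier
    return without_cache, with_cache


# Assumed: a 50k-token document reused in 10 requests at $3 per million input tokens.
no_cache, cache = cached_prompt_cost(50_000, 10, 3.00)
print(f"without cache: ${no_cache:.4f}, with cache: ${cache:.4f}")
```

With these assumptions the input cost falls from $1.50 to about $0.32, and the savings grow with every additional request that reuses the same prefix.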

Claude Plans

| Plan | Price | Details |
|---|---|---|
| Free | $0 | Access to Haiku 4.5 with usage limits |
| Pro | $20/mo | Access to all models, higher limits, extended thinking |
| Max 5x | $100/mo | 5x the usage of Pro, priority access |
| Max 20x | $200/mo | 20x the usage of Pro, highest priority |
| Team | Per-seat | Collaboration features, admin controls, shared billing |
| Enterprise | Custom | SSO, SCIM, audit logs, custom data retention, dedicated support |

Google's Gemini models are natively multimodal — they were trained from the ground up on text, images, audio, and video together, rather than bolting vision onto a text model after the fact. This gives them particularly strong performance on tasks that mix modalities, like analyzing videos, understanding charts, or processing audio recordings.

Gemini 1.5 Pro

Pioneered the ultra-long context window. It first offered 1 million tokens (initially in preview) and was later expanded to 2 million, the largest available at the time. One million tokens is enough for about 1 hour of video, 11 hours of audio, or 700,000+ words in a single prompt. This transformed what was possible for document analysis, meeting transcription, and codebase understanding without RAG.

Gemini 2.0 Flash

Outperforms 1.5 Pro on most benchmarks at twice the speed. Introduces native multimodal output — it can generate images mixed with text in a single response, and produce steerable text-to-speech with 30+ voices. Also features native tool use, meaning the model can call search, code execution, and other tools without external orchestration.

Gemini 2.5 Pro

Google's most capable reasoning model. Features a “Deep Think” mode that uses parallel thinking techniques, considering multiple hypotheses simultaneously rather than following a single chain of thought. This approach can surface insights that sequential reasoning might miss, making it particularly strong on open-ended analysis and research tasks.

Gemini 2.5 Flash

Google's first fully hybrid reasoning model in the Flash tier: thinking can be turned on or off, and developers can set a thinking budget. Within that budget it uses dynamic reasoning, automatically adjusting how much processing to spend based on query complexity. Simple factual questions get quick answers; complex multi-step problems trigger deeper reasoning. This makes it an efficient general-purpose model that does not waste compute on easy tasks.

Google AI Product Ecosystem

| Product | Description |
|---|---|
| Google AI Studio | A free, browser-based tool for prototyping prompts, testing models, and generating API keys. The fastest way to start building with Gemini. |
| Vertex AI | Google Cloud's enterprise ML platform with a catalog of 200+ models (including Gemini, Llama, Mistral, and more). Offers fine-tuning, evaluation, deployment, and monitoring. |
| NotebookLM | An AI research tool that lets you upload documents and have conversations grounded in your sources. Known for its “Audio Overviews” feature that generates podcast-style discussions about your documents. |
| Agentspace | Google's enterprise platform for building and deploying AI agents that can search across company data, take actions in business tools, and automate workflows. |

Open Weight vs. Open Source vs. Proprietary

Proprietary models (GPT, Claude, Gemini) are only available through APIs — you cannot see or download the model weights. Open-weight models (Llama, Mixtral) release the trained weights so anyone can download and run them, but may restrict commercial use or not release training data/code. Truly open source means weights, training code, data, and documentation are all available under a permissive license. Most models called “open source” are technically open-weight.

Meta Llama 4 (April 2025)

A major architectural shift for Meta: Llama 4 moved to Mixture-of-Experts (MoE), where only a subset of the model's parameters is active for any given token. This means much lower inference cost than a dense model of the same total size, while maintaining high capability.

| Variant | Total Params | Active Params | Context | Highlight |
|---|---|---|---|---|
| Llama 4 Scout | 109B | 17B | 10M tokens | Longest context window of any released model at launch |
| Llama 4 Maverick | 400B | 17B | 1M tokens | Natively multimodal, trained on 30T+ tokens |
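A minimal NumPy sketch of sparse MoE routing: a small router scores every expert for each token, only the top-k experts run, and their outputs are mixed using softmax weights over the selected scores. It uses top-2 routing over toy random experts, as in Mixtral; Llama 4's actual configuration differs (it also routes every token through a shared expert), so treat this as an illustration of the idea rather than either model's implementation.

```python
import numpy as np

def moe_layer(x, router_w, experts, top_k=2):
    """Sparse Mixture-of-Experts feed-forward layer.

    x: (n_tokens, d_model); router_w: (d_model, n_experts);
    experts: list of callables, each mapping (d_model,) -> (d_model,).
    Only the top_k experts chosen by the router run for each token.
    """
    logits = x @ router_w                                # (n_tokens, n_experts)
    out = np.zeros_like(x)
    for t in range(x.shape[0]):
        top = np.argsort(logits[t])[-top_k:]             # indices of the k best experts
        weights = np.exp(logits[t, top] - logits[t, top].max())
        weights /= weights.sum()                         # softmax over the chosen experts
        for w, e in zip(weights, top):
            out[t] += w * experts[e](x[t])
    return out


rng = np.random.default_rng(0)
d_model, n_experts = 8, 4
# Each toy expert is a small random linear map followed by ReLU.
expert_mats = [rng.normal(scale=0.1, size=(d_model, d_model)) for _ in range(n_experts)]
experts = [lambda v, m=m: np.maximum(v @ m, 0.0) for m in expert_mats]
router_w = rng.normal(size=(d_model, n_experts))
tokens = rng.normal(size=(3, d_model))
print(moe_layer(tokens, router_w, experts, top_k=2).shape)
```

Because only k experts run per token, compute per token tracks the active parameter count (17B for both Llama 4 variants) rather than the total, although all experts must still fit in memory.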

Why Llama matters: Meta's decision to release Llama openly drove the cost of AI toward hardware and electricity rather than per-token API fees. It created a massive ecosystem of fine-tuned variants for specialized tasks — medical, legal, coding, and more — and gave organizations the ability to run capable models on their own infrastructure with full data privacy.

Mistral

A French AI company that has consistently punched above its weight. Mixtral 8x22B uses a sparse Mixture-of-Experts architecture with 141B total parameters but only 39B active per token, delivering strong performance at relatively low inference cost. Mistral has carved out a niche by offering competitive models with particularly strong multilingual capabilities.

| Product | Description |
|---|---|
| Le Chat | Consumer chatbot interface, similar to ChatGPT or Claude |
| La Plateforme | Developer API for accessing Mistral models programmatically |
| Codestral | Specialized code generation model, optimized for programming tasks |

DeepSeek

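A Chinese AI company that shocked the industry in early 2025. DeepSeek-R1 achieved reasoning capabilities comparable to OpenAI's o1 through a different emphasis: large-scale reinforcement learning with simple, rule-based rewards. Its precursor, DeepSeek-R1-Zero, learned to reason through reinforcement learning alone, with no supervised fine-tuning at all; DeepSeek-R1 added a small amount of curated “cold-start” data before RL to make its outputs more readable. Where other reasoning models were believed to rely on large amounts of human-labeled chain-of-thought data, DeepSeek showed that much of this behavior can emerge through trial and error.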
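DeepSeek's RL training used GRPO (Group Relative Policy Optimization): the model samples several answers to the same prompt, each answer gets a rule-based reward (for example, whether the final answer is correct), and each reward is compared with the group's average, so no separate value model is needed. The sketch below computes only those group-relative advantages; the reward function and sampled answers are hypothetical, and the full objective (clipped policy-ratio updates with a KL penalty) is omitted.

```python
import numpy as np

def group_relative_advantages(rewards, eps=1e-8):
    """GRPO-style advantages: score each sampled answer against its own group.

    Answers better than the group average get positive advantages and are
    reinforced; worse ones get negative advantages.
    """
    r = np.asarray(rewards, dtype=float)
    return (r - r.mean()) / (r.std() + eps)


def exact_match_reward(answer: str, reference: str) -> float:
    # Hypothetical rule-based reward: 1 if the final answer matches, else 0.
    return 1.0 if answer.strip() == reference.strip() else 0.0


samples = ["42", "41", "42", "17"]   # four sampled answers to one math prompt
rewards = [exact_match_reward(s, "42") for s in samples]
print(np.round(group_relative_advantages(rewards), 3))
```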

This was a watershed moment because it suggested that frontier-level performance might not require the largest compute budgets or proprietary data pipelines. DeepSeek released the model weights under the MIT license, enabling researchers worldwide to study and build upon their approach. The release triggered a reassessment of AI cost assumptions across the entire industry.

Google Gemma 4

Google's open-weight model line, released under the Apache 2.0 license (fully permissive for commercial use). Available in 2B and 4B parameter sizes with a 256K context window. Designed to run efficiently on phones, Raspberry Pi devices, and other edge hardware. Proves that useful AI does not always require cloud-scale infrastructure.

xAI Grok 3

Built by Elon Musk's xAI and trained with access to X (formerly Twitter) data, giving it a distinctive real-time awareness of public discourse. Features a “Big Brain” mode for extended reasoning on difficult problems, similar to other reasoning-mode models. Available through the xAI API and the Grok chat interface on X.

Cohere Command R+

An enterprise-focused model optimized specifically for Retrieval-Augmented Generation (RAG). Its standout feature is inline citations: when answering questions from provided documents, it includes precise references to source passages, which helps reduce hallucinations and makes answers verifiable. Cohere also offers Embed v4 for multimodal embeddings, enabling search across both text and images.

Perplexity Sonar

Perplexity is not a model company in the traditional sense — it is an AI-native search product that combines multiple underlying models with real-time web search. Sonar is their API offering that lets developers build search-augmented AI applications. Every response is grounded in live web results with source citations, making it a fundamentally different approach from standalone language models.