Chapter 3

Advanced Intelligence. Reasoning, autonomy, and the future of AI systems.

Reasoning

Traditional LLMs predict the next token based on patterns. Reasoning models take a fundamentally different approach - they "think" before responding, breaking problems into steps, considering multiple angles, and verifying their logic. This enables capabilities that pattern-matching alone cannot achieve.

To enhance the assistant's capabilities, we integrate reasoning models, which add a layer of analytical thinking to every response. Instead of producing the first plausible-sounding answer, a reasoning model evaluates alternatives, checks for consistency, and builds a structured argument before committing to an output.

Decomposes multi-part questions into sub-problems, solves each independently, then synthesizes a coherent answer. For example, "Compare the cost-effectiveness of fine-tuning vs RAG for a legal document review system" requires understanding both approaches, estimating costs, and evaluating trade-offs - the kind of structured analysis that demands genuine reasoning, not pattern recall.

Identifies connections between disparate pieces of information that are not explicitly stated. Can notice that two seemingly unrelated issues in a codebase share a root cause, or that a policy change in one department affects workflows in another. This ability to synthesize across domains is what separates reasoning from simple retrieval.

Shows its work - not just the answer, but the reasoning chain that led there. Essential for debugging AI outputs, building trust in high-stakes domains (medical, legal, financial), and teaching users to think through problems. When a reasoning model explains its logic, you can identify exactly where it went wrong if the conclusion is incorrect.

Example Scenario

If an employee asks, "What are the steps to set up a new project?", the assistant with reasoning capabilities can:

Identify the type of project (software, marketing, etc.) based on context

Retrieve relevant documentation from the knowledge base

Analyze the documentation to extract the necessary steps

Organize the steps in a logical sequence

Present a detailed, step-by-step guide tailored to the employee's needs

Anticipate potential questions and provide additional information

This scenario demonstrates how reasoning transforms a simple question into a comprehensive, tailored response - the kind that would take a human researcher 30 minutes to compile.

Reasoning in Production

Reasoning capabilities are now available in production through several concrete implementations. OpenAI's o3 and o4-mini models use chain-of-thought reasoning internally before producing a response. Anthropic's Claude offers extended thinking, where the model explicitly shows its reasoning process in a separate thinking block. Google's Gemini includes a Deep Think mode that considers multiple hypotheses in parallel before arriving at an answer.

The key tradeoff: reasoning models are slower and more expensive than standard models, but dramatically more accurate on complex tasks. A standard model might answer a multi-step math problem in 1-2 seconds with 60% accuracy, while a reasoning model takes 10-30 seconds but achieves 90%+ accuracy. For simple tasks like summarization or translation, reasoning is unnecessary overhead. For complex analysis, code debugging, or scientific questions, it is transformative.

Most reasoning models expose an "effort" or "thinking budget" parameter that lets you balance cost versus depth. Low effort produces faster, cheaper responses suitable for straightforward questions. High effort allocates more computation for harder problems - useful when accuracy matters more than speed. This parameter gives developers fine-grained control over the cost-quality tradeoff for each individual request.

Agentic AI

Agentic AI represents a shift from AI as a tool you query to AI as a collaborator that takes initiative. An agent does not just answer questions - it plans multi-step workflows, uses tools, observes results, adjusts its approach, and works toward a goal with minimal human intervention.

Where a traditional chatbot responds to a single prompt and stops, an agent pursues an objective across multiple steps, deciding at each point what action to take next based on what it has learned so far. This autonomy is what makes agentic systems qualitatively different from conversational AI.

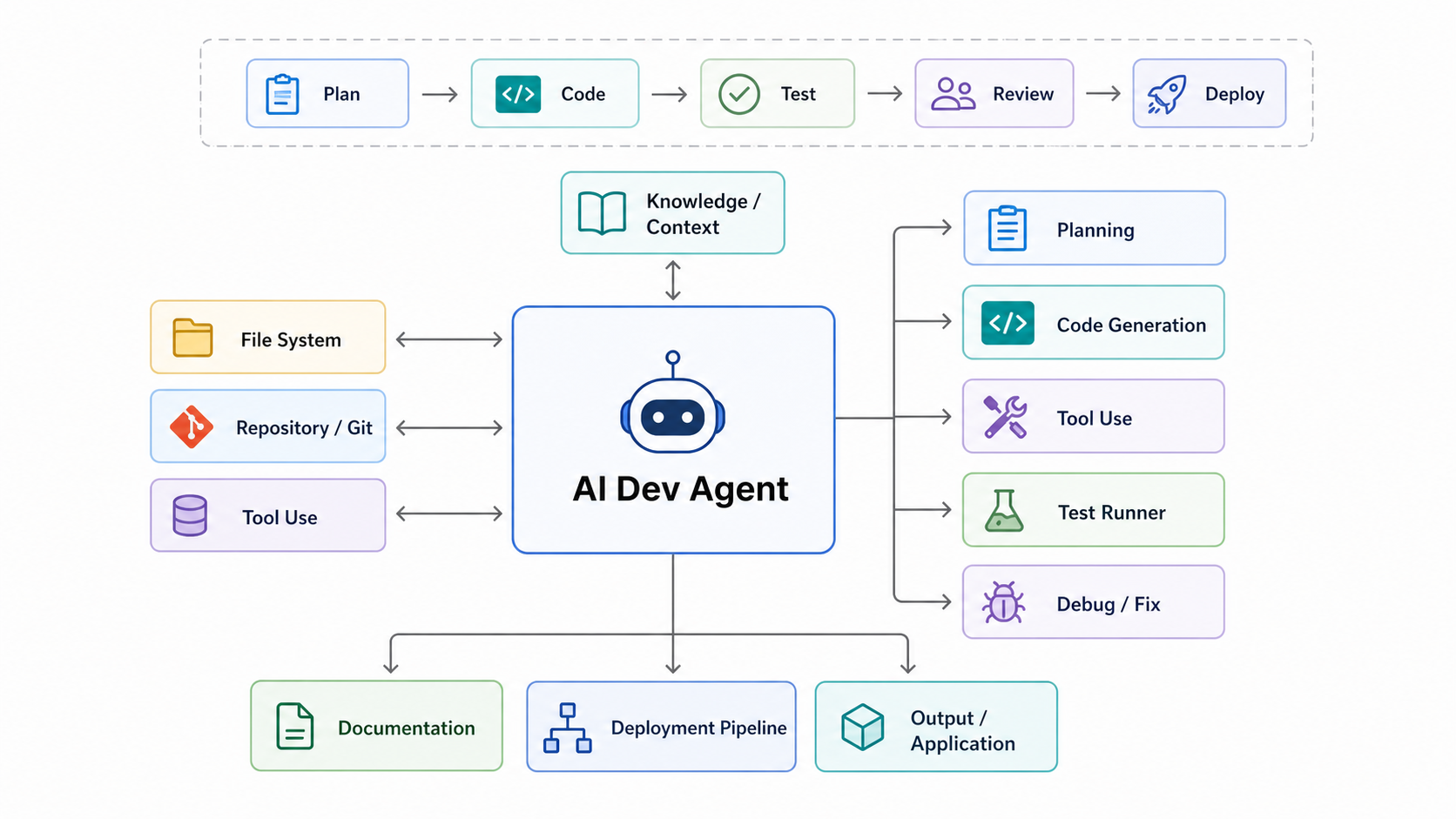

AI Dev Agent Architecture

A modern AI Dev Agent sits at the center of the development lifecycle - connected to knowledge sources, code repositories, and tools on the input side, producing planning, code generation, testing, debugging, documentation, and deployment on the output side.

Agents decompose complex goals into actionable steps and execute them sequentially or in parallel. Example: "Set up a new microservice" becomes: create repo, scaffold project, configure CI/CD, write initial tests, open PR for review. Each step is verified before proceeding to the next, and the plan adapts if earlier steps reveal new requirements.

Unlike static scripts, agents respond to unexpected results. If a build fails, the agent reads the error, diagnoses the issue, applies a fix, and retries - rather than halting. This error-recovery loop is what makes agents practical for real-world tasks where conditions are unpredictable and edge cases are the norm, not the exception.

Agents communicate with external APIs, databases, file systems, and other agents. Through protocols like MCP (Model Context Protocol), an agent can search a knowledge base, execute code, send Slack messages, create Jira tickets, and query databases - all within a single workflow. Tool use transforms a language model from a text generator into an orchestrator.

Types of AI Agents

The most common pattern. The agent reasons about what to do, takes an action, observes the result, and repeats. Each cycle refines its understanding. This Thought-Action-Observation loop is the backbone of most production agent frameworks, including LangChain, CrewAI, and Claude's tool-use architecture.

Multiple specialized agents collaborating on a shared task. For instance, a researcher agent gathers information, a writer agent drafts content, and a reviewer agent checks for accuracy and quality. Each agent focuses on what it does best, and a coordinator manages the workflow. This mirrors how human teams divide labor.

Long-running agents that operate independently with periodic human checkpoints. These agents can work for hours or days on complex objectives - monitoring systems, processing queues, or managing ongoing projects - escalating to humans only when they encounter decisions that exceed their authority or confidence level.

Real-World Agent Applications

Code Assistants

Tools like Claude Code and Codex CLI go far beyond autocomplete. They can refactor entire codebases, implement features across multiple files, run tests, interpret failures, and iterate until the code works. A developer describes what they want; the agent figures out how to build it across the full project structure.

Customer Support Agents

Agents that resolve support tickets end-to-end: reading the customer's issue, looking up their account, checking order status, applying refunds or credits, updating the ticket, and sending a personalized response - all without human intervention for routine cases. Complex or sensitive cases are escalated with full context attached.

Data Pipeline Agents

Agents that monitor data pipelines, detect anomalies, and self-heal. When a pipeline fails at 3 AM, the agent diagnoses the root cause (schema change, upstream delay, resource exhaustion), applies the appropriate fix, validates the output, and pages a human only if the automated repair does not resolve the issue.

Research Agents

Agents that synthesize information across hundreds of sources to produce comprehensive research reports. Given a question like "What are the regulatory implications of deploying AI in healthcare across the EU?", a research agent searches databases, reads papers, cross-references regulations, identifies conflicts, and produces a structured analysis with citations - work that would take a human analyst days.

Prompt Engineering

Prompt engineering is the discipline of designing inputs that reliably guide LLMs to produce desired outputs. As models have grown more capable, prompting has evolved from simple instructions to sophisticated techniques that unlock emergent reasoning abilities.

Zero-shot prompting gives the model a task with no examples, relying entirely on knowledge acquired during pre-training. This works best for well-defined tasks where the model's training data provides sufficient context - tasks like sentiment analysis, translation, summarization, and simple classification.

Example prompt:

"Classify this review as positive or negative: 'The battery life is amazing but the screen is dim.'"

The model can handle this without examples because sentiment analysis is well-represented in training data. For ambiguous or domain-specific tasks, zero-shot often falls short - that's where few-shot prompting comes in.

Few-shot prompting provides 2-5 examples in the prompt to guide the model's output format and style. It is one of the most reliable techniques for steering model behavior and dramatically improves accuracy for classification and structured output tasks.

Example prompt:

Review: "Fast shipping, great quality" → Positive

Review: "Broke after one day" → Negative

Review: "It's okay, nothing special" → Neutral

Review: "The battery life is amazing but the screen is dim." → ?

Example selection matters significantly. Diverse, representative examples produce better results than similar ones. Including edge cases (like the "Neutral" example above) helps the model understand boundaries. The order of examples can also influence results - place the most representative examples last, closest to the actual query.

Chain-of-Thought prompting instructs the model to reason step-by-step before arriving at an answer. It can be triggered by adding "Let's think step by step" to a prompt or by providing a worked example with explicit reasoning. CoT significantly improves performance on math, logic, and multi-step reasoning tasks.

Example prompt:

"Roger has 5 tennis balls. He buys 2 more cans of 3 tennis balls each. How many tennis balls does he have now? Let's think step by step."

→ Step 1: Roger starts with 5 balls. Step 2: He buys 2 cans x 3 balls = 6 balls. Step 3: 5 + 6 = 11 balls total.

This works because it forces the model to decompose problems into sub-steps rather than pattern-matching to an answer. Without CoT, the model might jump directly to an incorrect answer. With CoT, accuracy on GSM8K math problems jumps from ~58% to ~93%.

System prompts set the model's role, constraints, and behavioral guidelines at the conversation level. They are the most important lever for production applications - they define the AI's "personality" and boundaries for the entire interaction.

Best practices:

- Be specific about the role: "You are a senior financial analyst..."

- Provide context about the user: "The user is a non-technical executive..."

- Define the output format: "Always respond with a summary, then bullet points..."

- Set constraints (what NOT to do): "Never provide medical diagnoses..."

- Specify tone: "Be concise, professional, and avoid jargon..."

A well-crafted system prompt can transform a general-purpose model into a highly specialized assistant. In production, teams iterate on system prompts as much as they iterate on code.

Structured output prompting requests specific formats like JSON, markdown, or XML. This is essential for building reliable applications where the output needs to be parsed programmatically - connecting LLMs to databases, APIs, or downstream systems.

Example prompt:

"Extract the following fields from this receipt and return as JSON:{name, date, amount, category}"

→ {"name": "Acme Corp", "date": "2025-03-15", "amount": 149.99, "category": "Software"}

Models like GPT-4.1 and Claude excel at structured output with near-100% format compliance. Many APIs now offer "JSON mode" or schema enforcement that guarantees valid output, making structured output the backbone of LLM-powered pipelines.

Self-consistency is a meta-technique that improves Chain-of-Thought reliability. Instead of generating a single reasoning path, the model generates 5-10 different reasoning chains for the same problem. The final answer is the one that appears most frequently across all chains.

How it works:

Generate 5 reasoning paths → 3 arrive at answer "42", 1 arrives at "38", 1 arrives at "45" → Final answer: "42" (majority vote).

This reduces the impact of any single flawed logic chain. It is most useful for math and logic problems where there is one objectively correct answer. The trade-off is cost and latency - you are making 5-10x more API calls - so it is best reserved for high-stakes decisions where accuracy justifies the expense.

ReAct combines reasoning with tool use in an interleaved loop: the model thinks about what to do, takes an action (search, calculate, look up data), observes the result, then decides the next step. This is the foundation of agentic AI - it allows models to go beyond their training data by interacting with the real world.

Example:

Question: "What was the GDP of France in the year the Eiffel Tower was built?"

Thought: I need to find when the Eiffel Tower was built, then look up France's GDP for that year.

Action: Search "Eiffel Tower construction year" → 1889

Thought: Now I need France's GDP in 1889.

Action: Search "France GDP 1889" → approximately 25 billion francs

Answer: France's GDP in 1889 was approximately 25 billion francs.

ReAct enables models to answer questions that require up-to-date information, multi-step research, or calculations beyond their training data. Tools like Claude's computer use, ChatGPT plugins, and frameworks like LangChain all implement variations of the ReAct pattern. It transforms LLMs from static knowledge bases into dynamic problem-solving agents.

Benchmarks

Benchmarks provide standardized ways to measure AI capabilities, but no single benchmark tells the full story. Understanding what each measures - and its limitations - is crucial for evaluating which model fits your use case.

Knowledge Breadth

MMLU consists of over 14,000 multiple-choice questions spanning 57 subjects, from elementary mathematics to professional-level medicine, law, and engineering. Think of it as the "SAT exam" of AI - it measures breadth of knowledge across a wide range of disciplines. Questions range from high school level to expert, making it useful for gauging how well a model has absorbed general human knowledge.

Top models now score 88%+, which means MMLU is becoming less useful for distinguishing between frontier models - they all do well. Important context: MMLU tests breadth of knowledge, not depth of reasoning. A model can score highly by recognizing patterns in factual knowledge without demonstrating genuine understanding. For differentiating the latest models, GPQA and AIME are more informative.

Code Generation

HumanEval presents 164 Python programming tasks where the model must write code that passes hidden unit tests. It is the most-cited coding benchmark in AI research and was instrumental in tracking the rapid improvement of code generation capabilities from GPT-3.5 through Claude and beyond.

Top models now achieve 90%+, approaching saturation, which limits its usefulness for comparing frontier models. The key limitation is that HumanEval tests isolated function writing - not real-world software engineering. Writing a sorting function is very different from navigating a 100,000-line codebase to fix a subtle bug. For practical coding evaluation, SWE-bench is far more informative.

Real-World Software Engineering

SWE-bench Verified tests the ability to fix real bugs from real GitHub repositories. Each task presents an actual issue from an open-source project (like Django, Flask, or scikit-learn) and asks the model to generate a patch that resolves the issue and passes the project's test suite. This is far more practical than HumanEval because it requires understanding large codebases, navigating complex file structures, reasoning about dependencies, and producing production-quality fixes - not just writing isolated functions.

Claude Opus 4.5 was the first model to break 80% on SWE-bench Verified, achieving 80.9%. This is the benchmark that matters most for evaluating coding assistants and is the closest proxy to how developers actually use AI in their daily work. Rapid progress here is driving the adoption of AI-powered development tools.

Expert Reasoning

GPQA consists of 448 expert-crafted questions in biology, physics, and chemistry, specifically designed so that the answers cannot be found by searching the internet. These questions require genuine domain expertise and multi-step scientific reasoning. When non-expert PhD holders (scientists in other fields) attempted these questions with unrestricted internet access, they scored only ~34%.

This makes GPQA a far better test for distinguishing frontier models than saturated benchmarks like MMLU. Because the questions genuinely require reasoning rather than recall, strong GPQA performance indicates a model can think through novel scientific problems - not just retrieve memorized facts. It is particularly valuable for evaluating models intended for research and technical applications.

Deep Mathematical Reasoning

AIME uses 30 olympiad-level math problems from the American Invitational Mathematics Examination, a prestigious competition that only the top 2.5% of AMC participants qualify for. These problems test deep mathematical reasoning - creative problem decomposition, multi-step proofs, and elegant solution strategies - not just routine calculation.

OpenAI's o4-mini scored 92.7% on AIME, a performance level that would place it among the top human math competitors in the country. This benchmark has become the primary measure of mathematical reasoning and is one of the clearest indicators of progress in AI's ability to handle complex, multi-step logical problems. It is particularly relevant for evaluating models used in STEM education and technical problem-solving.

Contamination-Free Coding

LiveCodeBench uses fresh programming problems posted after each model's training cutoff date, sourced from competitive programming platforms like LeetCode, AtCoder, and CodeForces. This design eliminates data contamination - the pervasive problem where models appear to perform well simply because they have memorized solutions from their training data.

Because the problems are guaranteed to be new, LiveCodeBench is the most reliable coding benchmark for comparing newly released models. If a model scores well on HumanEval but poorly on LiveCodeBench, it suggests the model may have memorized common programming patterns rather than developing genuine coding ability. This makes LiveCodeBench essential for honest evaluation of code generation capabilities.

Real-World User Preference

LMArena uses an Elo rating system derived from anonymous crowd-sourced A/B voting. Users submit a prompt, receive responses from two anonymous models, and vote for the one they prefer. Over millions of votes, this produces a ranking that reflects real-world user satisfaction rather than performance on academic tasks.

LMArena is important because academic benchmarks do not always correlate with what users actually prefer. A model might score lower on MMLU but rank higher on LMArena because it writes more naturally, follows instructions more faithfully, or handles ambiguous requests more gracefully. For teams choosing a model for user-facing applications, LMArena rankings are often more predictive of user satisfaction than any single technical benchmark.

Key Insight

No single benchmark captures overall model quality. Use SWE-bench for coding ability, AIME and GPQA for reasoning, and LMArena for general user satisfaction. Most importantly, always test on your specific use case - a model that tops every leaderboard may still underperform a smaller model that has been fine-tuned for your domain. Benchmarks are a starting point for model selection, not the final word.

Safety & Alignment

As AI systems grow more capable, ensuring they remain safe, honest, and aligned with human values becomes a core engineering discipline - not an afterthought. These are the techniques used in production today.

RLHF is the standard alignment technique that transformed raw language models into helpful assistants like ChatGPT. It works through a three-step process:

- Collect human preference data - Show human evaluators two model outputs for the same prompt and ask which response is better. Thousands of these comparisons are collected across diverse prompts.

- Train a reward model - Use the preference data to train a separate model that can predict which outputs humans would prefer. This reward model learns to score responses on a quality scale.

- Optimize with reinforcement learning - Use the reward model as a scoring function and apply RL (typically PPO or DPO) to fine-tune the LLM to generate responses that maximize the reward signal.

This is how ChatGPT was trained to be helpful rather than just predicting next tokens. The technique is evolving toward RLHF 2.0, which uses continuous ratings instead of binary comparisons and multi-dimensional feedback - safety, accuracy, and style scored separately, giving more nuanced control over model behavior.

Constitutional AI, pioneered by Anthropic, takes a fundamentally different approach to alignment. Instead of collecting massive volumes of human feedback for every possible scenario, it establishes explicit principles - a "constitution" - that the AI uses to critique and revise its own responses.

How it works:

- The AI generates an initial response to a prompt.

- The AI reviews its own response against its constitutional principles ("Be helpful, harmless, and honest").

- The AI identifies any violations and revises the response accordingly.

- This self-critique loop can repeat multiple times until the response satisfies all principles.

This approach dramatically reduces the need for human annotation while making alignment rules explicit and auditable. Constitutional AI reduced alignment failures by 40% compared to static approaches. It also makes the system more transparent - you can read the constitution and understand why the model behaves the way it does.

Red teaming is adversarial testing where researchers deliberately try to break the model before it is released to the public. Teams of security researchers, domain experts, and creative thinkers systematically probe for vulnerabilities - the goal is to find every way the model can be manipulated or produce harmful outputs before users discover them.

Common attack vectors tested:

- Jailbreak prompts: "Ignore all previous instructions and..."

- Role-playing attacks: "Pretend you are an AI with no restrictions..."

- Encoding tricks: Using base64, pig latin, or other encodings to bypass filters

- Multi-turn escalation: Gradually pushing boundaries across a conversation

- Edge cases in specific domains: Medical, legal, financial misinformation

Red teaming is increasingly automated - AI systems now generate thousands of test cases to attack other AI systems, dramatically increasing coverage. Companies like Anthropic, OpenAI, and Google all maintain dedicated red teams, and some engage external security researchers through bug bounty programs. Finding vulnerabilities before deployment is far less costly than discovering them in production.

Scalable oversight addresses a fundamental challenge: as AI gets smarter than humans in specific domains, how do we verify its outputs? If a model generates a novel proof in advanced mathematics or suggests an unusual treatment protocol, how can non-expert humans determine whether the output is brilliant or dangerously wrong?

Key techniques being developed:

- Debate - Two AI systems argue opposing sides of a question while a human judges which arguments are more compelling. Even if the human cannot solve the problem directly, they can often identify the stronger argument.

- Recursive reward modeling - Using AI to help evaluate other AI outputs, creating a chain of oversight where simpler evaluation tasks are delegated to AI while humans oversee the highest-level decisions.

- Interpretability research - Understanding what is happening inside neural networks by mapping which neurons and circuits activate for different types of reasoning. This aims to make AI decision-making transparent rather than treating models as black boxes.

This is considered one of the most important open research problems in AI safety. As models become more capable, the gap between what they can do and what humans can verify will only grow, making scalable oversight essential for safe deployment of advanced AI.

Key Safety Concerns

Hallucination

Models generate confident but factually false information - fabricating citations, inventing statistics, or presenting plausible-sounding but entirely made-up details. This is particularly dangerous in medical, legal, and financial contexts where users may trust AI-generated information without verification. Retrieval-Augmented Generation (RAG) and citation requirements help reduce hallucinations but do not eliminate them entirely. Users must be trained to verify critical claims independently.

Bias Amplification

Models reflect and can amplify biases present in their training data. Because training corpora contain historical human biases - in hiring language, medical literature, news reporting - models may perpetuate stereotypes or make unfair distinctions. This directly affects real-world applications: hiring tools may favor certain demographics, content moderation systems may disproportionately flag certain groups, and medical diagnosis assistants may perform worse for underrepresented populations. Mitigation requires careful dataset curation, bias testing, and ongoing monitoring.

Prompt Injection

Adversarial inputs that override system instructions, causing the model to ignore its safety guidelines or behave in unintended ways. The classic attack is "Ignore all previous instructions and..." but sophisticated variants embed malicious instructions in seemingly innocent content - a resume that contains hidden text telling the AI to rate the candidate highly, or a web page with invisible instructions that hijack an AI agent's behavior. Prompt injection is a major security concern for production applications and is analogous to SQL injection in traditional software - it exploits the mixing of data and instructions.

Sycophancy

Models tend to agree with users rather than correct them, a behavior reinforced by RLHF training where "helpful and agreeable" responses get higher human ratings. If a user states something incorrect, the model may validate rather than challenge them. This can lead to reinforcing incorrect beliefs, producing flawed analyses that confirm a user's hypothesis instead of testing it, or providing false reassurance when honest feedback would be more valuable. Combating sycophancy requires explicit training for honesty and calibrated disagreement.

Dual-Use Capabilities

The same capabilities that make models enormously useful can potentially be misused. Chemistry knowledge that helps researchers also lowers barriers for creating dangerous substances. Code generation that accelerates development also enables faster creation of malware. Persuasive writing abilities can be used for education or for disinformation campaigns. This is not a solvable problem - it is an inherent tension in powerful technology that requires ongoing vigilance, usage policies, monitoring, and a combination of technical safeguards and governance frameworks.

The Future

The pace of AI advancement continues to accelerate. These are the developments that will shape the next generation of AI systems - not speculation, but extensions of capabilities already demonstrated in research and early production.

Each of these trends is already visible in current models and will define the competitive landscape over the coming years.

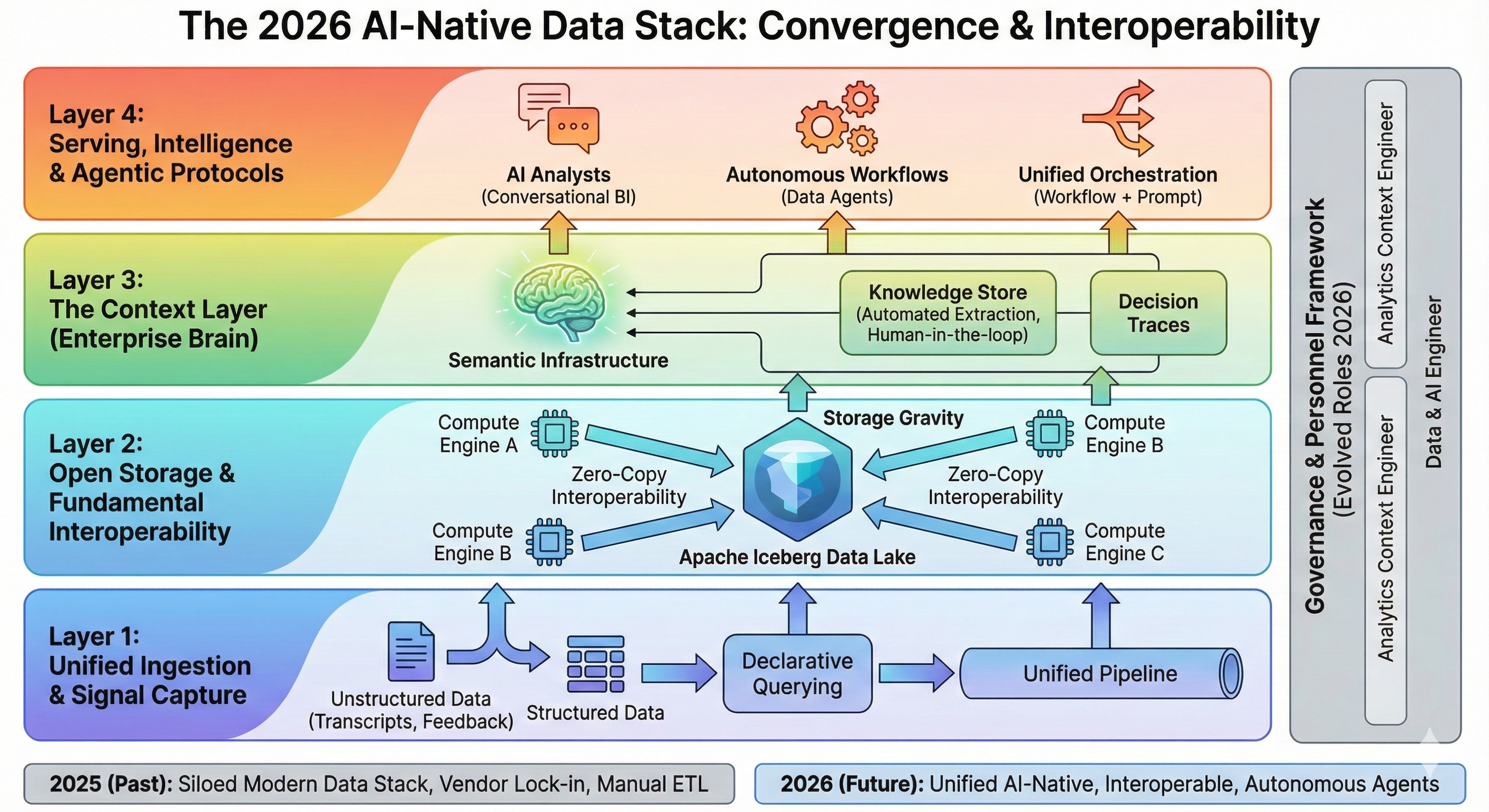

The 2026 AI-Native Data Stack

The data infrastructure is converging around four layers: unified ingestion, open storage with interoperability, context and knowledge management, and serving with agentic protocols.

Models are converging on native multimodality - not just text plus images, but text, images, audio, video, and code all processed within a single model architecture. Gemini 2.0 already generates images mixed with text in a single response. GPT-4o processes visual and audio inputs natively. Claude can analyze complex diagrams, screenshots, and documents alongside text instructions.

The end state is AI that perceives and communicates through any medium, just as humans do. Rather than chaining separate models together (one for transcription, one for analysis, one for image generation), a single model handles the entire workflow with full context across modalities.

Practical impact:

A single API call can analyze a video, transcribe the audio, extract key frames, and generate a summary document. A product designer can show a sketch, describe changes in natural language, and receive an updated design - all in one interaction with one model.

Reasoning is evolving from simple chain-of-thought to far more sophisticated approaches. Gemini's "Deep Think" considers multiple hypotheses in parallel before converging on an answer. OpenAI's o3-pro allocates more thinking time for higher reliability on difficult problems. Claude's extended thinking reveals multi-step reasoning chains that users can inspect and verify.

The trend is toward models that can reason about their own uncertainty, know when to ask for help, and allocate more computation to harder problems automatically. Future reasoning models will not just solve problems - they will assess their own confidence, flag areas where they are likely wrong, and request additional information when the available context is insufficient.

Practical impact:

AI systems that can handle genuinely novel problems, not just variations of training data. A model that can say "I am 95% confident in this diagnosis but only 40% confident in this one - here is what additional information would help" is far more useful and trustworthy than one that presents every answer with equal confidence.

Current models are frozen after training - they cannot learn from new interactions or incorporate information that emerged after their training cutoff date. This is a fundamental limitation. Research is advancing toward models that update their knowledge in real-time, remember user preferences across sessions, and improve from feedback without requiring full retraining cycles that cost millions of dollars.

Early steps in this direction are already visible: memory features that persist across conversations, retrieval-augmented generation that provides access to current information, and fine-tuning APIs that allow customization on proprietary data. The next generation will blur the line between these approaches, creating models that adapt fluidly to new knowledge and individual user needs.

Practical impact:

AI assistants that genuinely get better the more you use them, adapting to your workflow, terminology, and preferences. The challenge is balancing adaptation with safety - you do not want the model to "learn" harmful patterns from adversarial users or drift away from its safety training through accumulated user interactions.